By: Eric Siegel, Founder, Predictive Analytics World & Executive Editor, The Predictive Analytics Times

Would skipping breakfast kill you? Not necessarily, but confusing correlation and causation might. Find out why in this article, originally published in Quartz and adapted from Eric Siegel’s recently released Revised and Updated edition of Predictive Analytics: The Power to Predict Who Will Click, Buy, Lie, or Die (available in paperback, e-book, and audiobook).

The results are in: Skipping breakfast is associated with heart disease. But that doesn’t necessarily mean breakfast deserves its reputation as the most important meal of the day.

Harvard University medical researchers have concluded that American men between the ages of 45 and 82 who skipped breakfast showed a 27% higher risk of coronary heart disease over a 16-year period. However, rather than directly affecting health, eating breakfast may simply be a proxy for lifestyle.

People who skip breakfast tend to lead more stressful lives. Participants in the study who skipped breakfast “were more likely to be smokers, to work full time, to be unmarried, to be less physically active, and to drink more alcohol,” Harvard researchers report. In other words, the link between breakfast and health may not be causal.
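
To make the "proxy for lifestyle" reasoning concrete, here is a minimal simulation sketch. It is not the Harvard analysis: the `stressed` lifestyle variable and every probability in it are invented for illustration. In this toy population breakfast has no effect on heart disease at all, yet breakfast skippers still show a higher crude risk, because a stressful lifestyle raises both the chance of skipping breakfast and the chance of disease.

```python
import random


def simulate(n=200_000, seed=0):
    """Build a toy population; all probabilities below are made-up assumptions."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        stressed = rng.random() < 0.3                       # hypothetical lifestyle factor
        skips = rng.random() < (0.6 if stressed else 0.2)   # stress makes skipping likelier
        # Disease depends ONLY on lifestyle here, never on breakfast.
        disease = rng.random() < (0.20 if stressed else 0.10)
        rows.append((stressed, skips, disease))
    return rows


def risk(rows, skips, stressed=None):
    """Share of people with disease among those matching the given filters."""
    group = [d for s, k, d in rows
             if k == skips and (stressed is None or s == stressed)]
    return sum(group) / len(group)


def risk_ratio(rows, stressed=None):
    """Risk among breakfast skippers divided by risk among breakfast eaters."""
    return risk(rows, True, stressed) / risk(rows, False, stressed)


rows = simulate()
crude = risk_ratio(rows)                          # ignores lifestyle
within_stressed = risk_ratio(rows, stressed=True)  # compares like with like
within_relaxed = risk_ratio(rows, stressed=False)

print(f"crude risk ratio:           {crude:.2f}")
print(f"risk ratio, stressed only:  {within_stressed:.2f}")
print(f"risk ratio, relaxed only:   {within_relaxed:.2f}")
```

The crude ratio comes out well above 1, while the ratios computed separately within each lifestyle group sit close to 1. The apparent breakfast effect disappears once like is compared with like, which is the pattern one would expect if the real-world link were driven by lifestyle rather than by breakfast itself.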

This is a perfect example of why a certain scientific mantra is often repeated: Correlation does not imply causation. Yet data scientists often confuse the two, succumbing to the temptation to over-interpret. And that can lead us to make some really bad decisions—which could put a damper on the enormous value of deriving predictions from data.

Predictive analytics draws on the growing availability of data to determine which factors indicate the most likely outcomes for people ranging from medical patients to criminals to employees. This practice has major implications for improving operations in healthcare, financial services, law enforcement, government, and manufacturing, among other industries.

Yet there’s a real risk that advances in predictive analytics will be hampered by our overly interpretive minds. Stein Kretsinger, founding executive of Advertising.com, offers a classic example. In the early 1990s, as a graduate student, Stein was leading a medical research meeting, assessing the factors that determine how long it takes to wean a person off a respirator. This was before the advent of PowerPoint, so Stein displayed the factors, one at a time, on overhead transparencies. The team of healthcare experts nodded their heads, offering one explanation after another for the relationships shown in the data.

But after going through several transparencies, Stein realized that he’d been placing them with the wrong side up—thus displaying mirror images of his graphs that depicted the opposite of the true relationships between data points. After he flipped them to the correct side, the experts seemed just as comfortable as before, offering new explanations for what was now the very opposite effect of each factor.

In other words, our thinking is malleable. People can readily find underlying theories to explain just about anything.

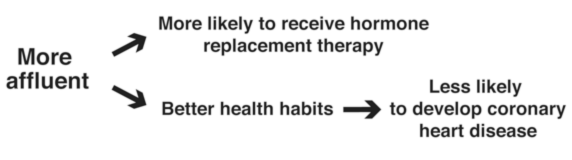

Take the case of a published medical study that found that women who received hormone replacement therapy showed a lower incidence of coronary heart disease. Could it be that a new treatment for this disease had been discovered?

Later, a properly controlled experiment disproved this conclusion. Instead, the current thinking is that more affluent women had access to the hormone replacement therapy, and these same women had better health habits overall. This sort of follow-up analysis is critical so that, in this case, women are not needlessly prescribed hormone replacement therapy.

Businesses also mistake effect for cause….

CONTINUE READING: Access the complete article in Quartz, where it was originally published

Adapted with permission of the publisher from Predictive Analytics: The Power to Predict Who Will Click, Buy, Lie, or Die, Revised and Updated Edition (Wiley, January 2016) by Eric Siegel, Ph.D. Siegel is the founder of the Predictive Analytics World conference series—which covers both business and government deployment—executive editor of The Predictive Analytics Times, and a former computer science professor at Columbia University. For more information about predictive analytics, see the Predictive Analytics Guide.

